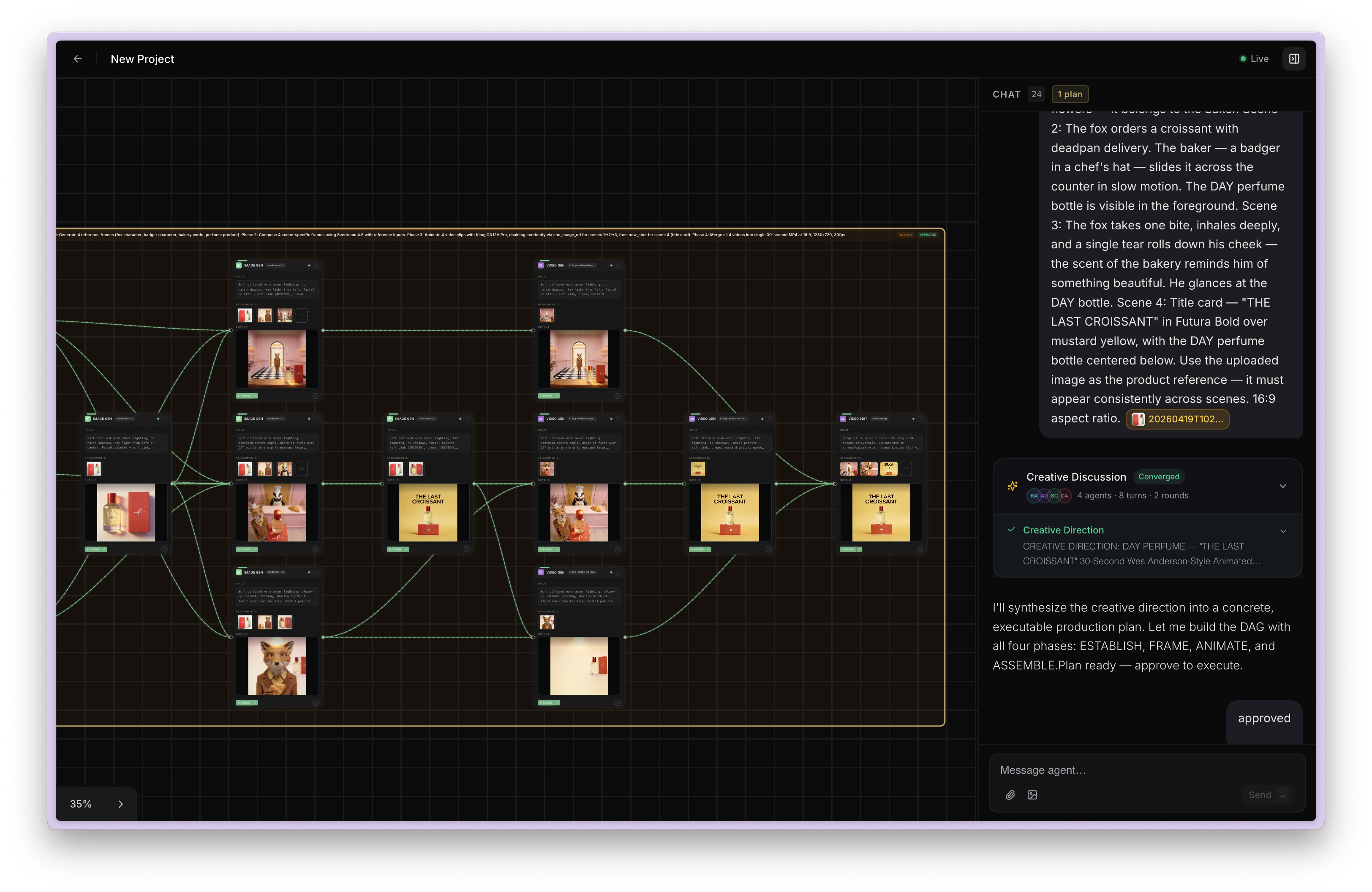

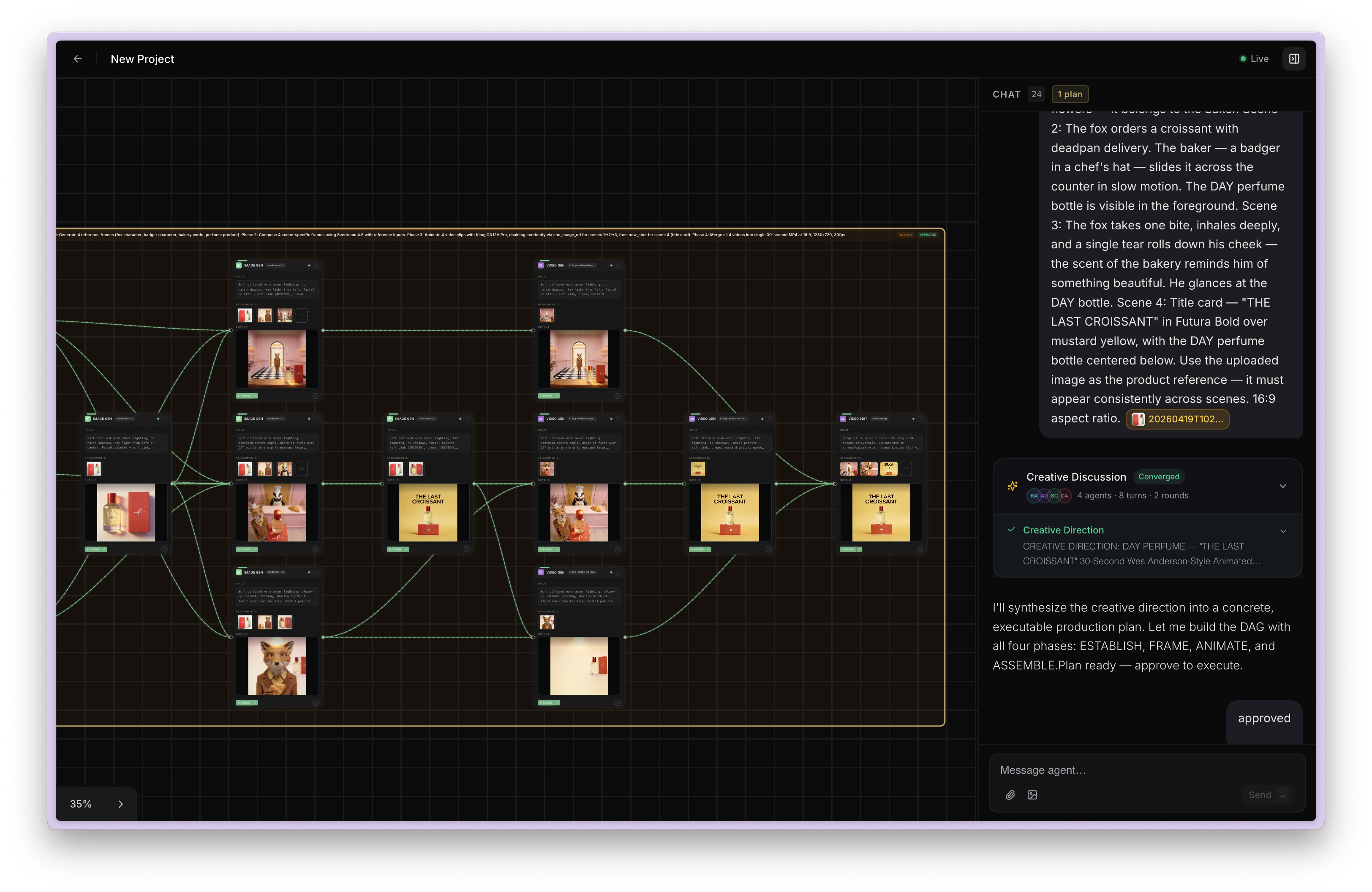

Agentic Canvas

A four-stage multi-agent system that converts a natural language prompt into an executed creative workflow. Each stage is a specialized LLM agent.

The planner operates in two modes. In full mode, the model catalog is injected into the LLM's context and it picks models and fills input parameters directly. In subagent mode, the planner generates a minimal DAG — just step descriptions, agent types, and dependencies — and defers model selection to a separate agent downstream. Subagent mode keeps the planner's context window lean, which matters when the catalog is large.

Both modes stream output progressively — the frontend starts rendering the plan while the LLM is still generating it. The stream carries both reasoning tokens (the model's thought process, shown as a thinking indicator) and content tokens (the JSON plan itself). If streaming fails or yields nothing, the system falls back to a non-streaming call transparently.
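A minimal sketch of the stream-then-fall-back behavior. It assumes a client with a streaming call that yields `(kind, text)` pairs and a separate blocking call that returns the whole plan. `generate_plan`, `stream_call`, `blocking_call`, and the chunk shape are hypothetical names, not the system's actual API.

```python
from typing import Callable, Iterable, List, Tuple

# Hypothetical chunk shape: (kind, text), where kind is "reasoning" or "content".
Chunk = Tuple[str, str]


def generate_plan(
    stream_call: Callable[[], Iterable[Chunk]],
    blocking_call: Callable[[], str],
    on_reasoning: Callable[[str], None] = lambda text: None,
    on_content: Callable[[str], None] = lambda text: None,
) -> str:
    """Stream plan tokens to the UI; fall back to a blocking call if streaming fails or is empty."""
    parts: List[str] = []
    try:
        for kind, text in stream_call():
            if kind == "reasoning":
                on_reasoning(text)  # e.g. drive a "thinking" indicator
            elif kind == "content":
                parts.append(text)
                on_content(text)  # e.g. render the partial JSON plan progressively
    except Exception:
        parts = []  # a broken stream is discarded rather than trusted
    plan = "".join(parts)
    if not plan.strip():
        plan = blocking_call()  # transparent non-streaming fallback
    return plan
```

Discarding a partially streamed plan on error is a choice made in this sketch: a truncated JSON plan is usually cheaper to regenerate than to repair.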

Before the planner runs, a capability summary is built from the model catalog — a compact list of which agent types are actually available, with max resolution and duration hints. This is cached with a short TTL and injected into the planner's instructions, so it never generates steps for capabilities that don't exist in the catalog (e.g., planning a 3D step when no 3D models are active).
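The capability summary can be sketched as a small TTL cache in front of a summarizer. The catalog record fields (`agent_type`, `active`, `max_resolution`, `max_duration_s`), the class name, and the 60-second default TTL are assumptions for illustration; the source only says the TTL is short.

```python
import time
from typing import Callable, Optional


class CapabilitySummaryCache:
    """TTL cache for a compact, planner-facing summary of active capabilities."""

    def __init__(self, load_catalog: Callable[[], list], ttl_seconds: float = 60.0,
                 clock: Callable[[], float] = time.monotonic):
        self._load_catalog = load_catalog  # e.g. one batch query against the catalog
        self._ttl = ttl_seconds
        self._clock = clock
        self._value: Optional[str] = None
        self._expires_at = 0.0

    def get(self) -> str:
        now = self._clock()
        if self._value is None or now >= self._expires_at:
            self._value = self._summarize(self._load_catalog())
            self._expires_at = now + self._ttl
        return self._value

    @staticmethod
    def _summarize(catalog: list) -> str:
        by_type: dict = {}
        for model in catalog:
            if not model.get("active", False):
                continue  # inactive models never reach the planner
            agg = by_type.setdefault(model["agent_type"], {"res": 0, "dur": 0})
            agg["res"] = max(agg["res"], model.get("max_resolution", 0))
            agg["dur"] = max(agg["dur"], model.get("max_duration_s", 0))
        lines = []
        for agent_type, agg in sorted(by_type.items()):
            line = f"- {agent_type}: max resolution {agg['res']}px"
            if agg["dur"]:
                line += f", max duration {agg['dur']}s"
            lines.append(line)
        return "Available capabilities:\n" + "\n".join(lines)
```

Because inactive models are filtered out before summarizing, a capability such as 3D simply never appears in the planner's instructions when no 3D model is active.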

An Edit Planner handles modifications to existing plans. When a user tweaks a plan, the edit planner receives the current steps, completed outputs, and the user's modification instruction. It returns a revised plan where unchanged steps are marked as such — the system merges these with the originals to preserve their full model and field data. If the edit planner accidentally drops a step, it's re-added automatically in its original position.
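A sketch of the merge step. It assumes each step carries an `id`, that the edit planner returns unchanged steps as `{"id": ..., "unchanged": True}`, and that intentional removals are flagged with `"deleted": True`. That deletion flag is hypothetical; the source does not say how a deliberate removal is told apart from an accidental drop.

```python
def merge_edit(original: list, revised: list) -> list:
    """Merge an edit planner's output back into the original plan (hypothetical step shape)."""
    originals = {s["id"]: s for s in original}
    deleted = {s["id"] for s in revised if s.get("deleted")}

    merged = []
    for step in revised:
        if step.get("deleted"):
            continue
        if step.get("unchanged") and step["id"] in originals:
            merged.append(originals[step["id"]])  # restore full model + field data
        else:
            merged.append(step)  # new or modified step, taken as-is

    present = {s["id"] for s in merged}
    # Re-add steps the edit planner silently dropped, right after their nearest
    # surviving predecessor from the original ordering.
    for idx, step in enumerate(original):
        if step["id"] in present or step["id"] in deleted:
            continue
        insert_at = 0
        for prev in reversed(original[:idx]):
            matches = [i for i, s in enumerate(merged) if s["id"] == prev["id"]]
            if matches:
                insert_at = matches[0] + 1
                break
        merged.insert(insert_at, step)
        present.add(step["id"])
    return merged
```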

The validator uses a smaller, cheaper model since its job is relatively simple: check the plan for structural issues, not generate content. If it returns REVISE, the issues are formatted as an edit instruction and sent back to the Edit Planner — which fixes them and returns a revised plan. This loops iteratively until the plan passes or a round limit is hit, at which point the system proceeds with the best plan it has rather than blocking the user.
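The validate-and-repair loop reduces to a bounded retry. `validate` and `edit` stand in for the validator and Edit Planner LLM calls (hypothetical signatures), and "best plan" is assumed here to mean the one with the fewest reported issues.

```python
from typing import Callable, Tuple

Verdict = Tuple[str, list]  # ("PASS" | "REVISE", list of issue strings)


def validate_and_repair(
    plan: dict,
    validate: Callable[[dict], Verdict],
    edit: Callable[[dict, str], dict],
    max_rounds: int = 3,
) -> Tuple[dict, bool]:
    """Loop validator -> edit planner until PASS or the round limit; never block the user."""
    best_plan, best_issue_count = plan, None
    for round_no in range(max_rounds + 1):
        verdict, issues = validate(plan)
        if verdict == "PASS":
            return plan, True
        if best_issue_count is None or len(issues) < best_issue_count:
            best_plan, best_issue_count = plan, len(issues)
        if round_no == max_rounds:
            break
        # Turn the validator's issues into an edit instruction for the Edit Planner.
        instruction = "Fix the following validation issues:\n" + "\n".join(f"- {i}" for i in issues)
        plan = edit(plan, instruction)
    return best_plan, False  # proceed with the best plan seen so far
```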

The field verifier solves a different problem: the gap between what the planner intended and what the model API actually accepts. This is a hybrid LLM + deterministic system. The LLM pass maps uploaded files to the correct input fields, validates enum values, and normalizes array types. The deterministic pass then runs structural checks that don't need an LLM.

All agent prompts — planner, edit planner, validator, verifier, model selector — are stored in the database and loaded at runtime. This makes them hot-swappable without code deploys, enabling A/B testing of different instruction strategies in production.
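One way to A/B test database-stored prompts is sticky, weighted bucketing by session. This is a sketch under assumptions: `PromptVariant`, `pick_variant`, and the weights are hypothetical, and the source does not say how traffic is split between variants.

```python
import hashlib
from dataclasses import dataclass


@dataclass
class PromptVariant:
    name: str
    text: str    # prompt body as loaded from the database at runtime
    weight: int  # relative traffic share


def pick_variant(variants: list, session_id: str, agent: str) -> PromptVariant:
    """Deterministically bucket a session into a weighted variant (sticky across calls)."""
    total = sum(v.weight for v in variants)
    if total <= 0:
        raise ValueError("variants must have a positive total weight")
    digest = hashlib.sha256(f"{agent}:{session_id}".encode()).digest()
    bucket = int.from_bytes(digest[:8], "big") % total
    for v in variants:
        if bucket < v.weight:
            return v
        bucket -= v.weight
    return variants[-1]  # not reached when all weights are non-negative
```

Hashing the agent name together with the session id keeps a session on one variant for its lifetime while letting each agent's prompts be tested independently.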

The naive approach would be to select models for all steps in parallel. The problem: if step 2 depends on step 1's output, the model selector for step 2 needs to know which model step 1 will use. If step 1's image model outputs 2048x2048 images, for example, a video model that accepts 2048x2048 input is a different pick than one capped at 720p.

The solution is layer-by-layer topological traversal. Steps are sorted into dependency layers. Within each layer, model selection LLM calls run in parallel. But the system waits for a layer to complete before starting the next — so downstream layers get the actual upstream model selection, not a guess. Each selection includes output hints inferred from the chosen model's schema, so downstream picks are compatibility-aware.
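A sketch of layer-by-layer selection, assuming `deps` maps each step id to its upstream step ids and `select_one` is a hypothetical async wrapper around the model-selector LLM call that receives the upstream selections.

```python
import asyncio
from typing import Awaitable, Callable


def dependency_layers(deps: dict) -> list:
    """Group steps into layers so that every dependency sits in an earlier layer."""
    remaining = {step: set(ds) for step, ds in deps.items()}
    done = set()
    layers = []
    while remaining:
        layer = sorted(step for step, ds in remaining.items() if ds <= done)
        if not layer:
            raise ValueError("dependency cycle or unknown dependency")
        layers.append(layer)
        done.update(layer)
        for step in layer:
            del remaining[step]
    return layers


SelectFn = Callable[[str, dict], Awaitable[dict]]


async def select_models(deps: dict, select_one: SelectFn) -> dict:
    """Run selection calls in parallel within a layer, sequentially across layers."""
    selections: dict = {}
    for layer in dependency_layers(deps):
        results = await asyncio.gather(
            *(select_one(step, {d: selections[d] for d in deps[step]}) for step in layer)
        )
        # The next layer sees the actual upstream picks, including their output hints.
        selections.update(zip(layer, results))
    return selections
```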

Steps that don't need LLM selection are pre-resolved deterministically: text steps need no model at all, and utility steps are routed by keywords in their descriptions. The catalog is fetched in a single batch query to avoid N+1 DB calls during enrichment.

The executor uses Kahn's algorithm: in-degree tracking with an adjacency list of dependents. All steps with zero in-degree are launched as concurrent async tasks. When a task completes, its dependents' in-degrees are decremented, and newly ready steps are added to the next batch. This naturally maximizes parallelism without ever violating dependency order.
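A minimal asyncio version of the Kahn's-algorithm executor. `run_step` is a hypothetical coroutine that executes one step given its upstream outputs; error handling and cancellation are left out for brevity.

```python
import asyncio
from collections import defaultdict
from typing import Awaitable, Callable

RunStep = Callable[[str, dict], Awaitable[object]]


async def run_dag(deps: dict, run_step: RunStep) -> dict:
    """Launch each step as soon as all of its dependencies have finished."""
    in_degree = {step: len(ds) for step, ds in deps.items()}
    dependents = defaultdict(list)  # adjacency list: step -> steps waiting on it
    for step, ds in deps.items():
        for d in ds:
            dependents[d].append(step)

    outputs: dict = {}
    running: dict = {}  # asyncio.Task -> step id

    def launch(step: str) -> None:
        upstream = {d: outputs[d] for d in deps[step]}
        running[asyncio.create_task(run_step(step, upstream))] = step

    for step, degree in in_degree.items():
        if degree == 0:
            launch(step)

    while running:
        done, _ = await asyncio.wait(set(running), return_when=asyncio.FIRST_COMPLETED)
        for task in done:
            step = running.pop(task)
            outputs[step] = task.result()  # re-raises if the step failed
            for child in dependents[step]:
                in_degree[child] -= 1
                if in_degree[child] == 0:
                    launch(child)  # newly ready: start immediately

    if len(outputs) != len(deps):
        raise ValueError("dependency cycle: some steps never became ready")
    return outputs
```

Waiting with `FIRST_COMPLETED` rather than on a whole batch means a fast branch never waits for an unrelated slow one.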

Dependency wiring is fully automatic. When a step completes, its output is classified by media type (image, video, audio) and injected into the downstream step's input fields by introspecting the target model's schema. The system handles both array fields (e.g., a merge model that takes multiple URLs) and scalar fields transparently. Field name mappings are discovered from model schemas at startup, so it works even when different providers use different field names for the same concept.
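A simplified sketch of output wiring. Classifying by file extension and the `{"type": ..., "media": ...}` schema annotation are assumptions made for illustration; the source says field mappings are discovered from real model schemas at startup.

```python
from pathlib import PurePosixPath
from typing import Optional
from urllib.parse import urlparse

# Hypothetical extension table; a real system might inspect Content-Type instead.
MEDIA_EXTENSIONS = {
    "image": {".png", ".jpg", ".jpeg", ".webp"},
    "video": {".mp4", ".mov", ".webm"},
    "audio": {".mp3", ".wav", ".m4a"},
}


def classify_media(url: str) -> Optional[str]:
    suffix = PurePosixPath(urlparse(url).path).suffix.lower()
    for kind, extensions in MEDIA_EXTENSIONS.items():
        if suffix in extensions:
            return kind
    return None


def wire_output(inputs: dict, schema: dict, output_url: str) -> dict:
    """Inject an upstream output into the first schema field that accepts its media type."""
    kind = classify_media(output_url)
    if kind is None:
        raise ValueError(f"cannot classify {output_url}")
    for field, spec in schema.items():
        if spec.get("media") != kind:
            continue
        if spec.get("type") == "array":
            inputs.setdefault(field, []).append(output_url)  # e.g. a merge model's URL list
            return inputs
        if field not in inputs:
            inputs[field] = output_url  # scalar field, filled once
            return inputs
    raise ValueError(f"no free {kind} field in target schema for {output_url}")
```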

Each step's result streams to the frontend in real-time via Firestore — users see outputs appearing incrementally as each node completes. Both Firestore (real-time frontend sync) and MongoDB (durable persistence) are updated on every step completion. For long-running jobs (video generation, lip-sync), the executor polls for completion with awareness of external cancellation — if a user cancels mid-pipeline, running steps are terminated gracefully.
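Cancellation-aware polling can be sketched with an `asyncio.Event` that the cancel handler sets. `check_status`, `StepCancelled`, the state strings, and the interval and timeout defaults are hypothetical; a real executor would also ask the provider to cancel the remote job.

```python
import asyncio


class StepCancelled(Exception):
    pass


async def poll_until_done(check_status, cancel_event: asyncio.Event,
                          interval: float = 2.0, timeout: float = 900.0) -> dict:
    """Poll a long-running provider job, stopping early if the user cancels."""
    loop = asyncio.get_running_loop()
    deadline = loop.time() + timeout
    while True:
        status = await check_status()
        if status["state"] in ("succeeded", "failed"):
            return status
        if loop.time() >= deadline:
            raise TimeoutError("provider job did not finish in time")
        try:
            # Sleep for one poll interval, but wake immediately if cancellation is signaled.
            await asyncio.wait_for(cancel_event.wait(), timeout=interval)
        except asyncio.TimeoutError:
            continue  # no cancellation during this interval: poll again
        raise StepCancelled("cancelled by user")
```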

The planner is stateless — it takes a prompt and outputs a DAG. But it lives inside a multi-turn chat with history, uploaded files, and previous generations. A user says "now make that image into a video" — and "that image" refers to something from 5 messages ago.

Before calling either planner, a context window is assembled: conversation history, file references with their storage URLs (labeled by media type), and previous workflow outputs. Uploaded files are collected across the entire session, not just the current message, so multi-turn file references resolve correctly. This context is injected into the planner prompt so it can resolve anaphoric references ("that image", "the video from before") to actual asset URLs.
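A sketch of context assembly, assuming messages carry `(media_type, storage_url)` file references. `Message`, `build_planner_context`, the section labels, and the 20-message window are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Message:
    role: str
    text: str
    files: list = field(default_factory=list)  # (media_type, storage_url) pairs


def build_planner_context(history: list, prior_outputs: list, max_messages: int = 20) -> str:
    # Files are collected from the whole session, not only the recent window,
    # so "that image" from many messages ago can still be resolved.
    files, seen = [], set()
    for msg in history:
        for media, url in msg.files:
            if url not in seen:
                seen.add(url)
                files.append((media, url))
    parts = ["## Conversation"]
    parts += [f"{m.role}: {m.text}" for m in history[-max_messages:]]
    parts.append("## Uploaded files (oldest first)")
    parts += [f"- [{media}] {url}" for media, url in files] or ["- none"]
    parts.append("## Previous workflow outputs (oldest first)")
    parts += [f"- [{media}] {url}" for media, url in prior_outputs] or ["- none"]
    return "\n".join(parts)
```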

Session state is structured around plans — each plan has its steps, step results, approval status, and a run history. When a plan is fully rerun, the current results are archived into the run history before execution restarts, so users can compare across iterations. Generated outputs feed back into the chat as assistant messages, maintaining conversational continuity across plan boundaries.

The filmmaking module — co-developed with Microsoft — decomposes high-level episode briefs into a production-ready hierarchy: Episodes → Scenes → Shots. Each shot carries its own magnification (CU, MCU, WS, LS), action description, composition reference, and explicit links to character, location, and prop assets. A state machine tracks every entity through its lifecycle — from creation through asset readiness, generation, review, and final approval.
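The entity lifecycle can be modeled as an explicit transition table. The stage names follow the lifecycle described above; the exact transitions, including the `IN_REVIEW -> GENERATING` edge for a rejected take, are assumptions.

```python
from enum import Enum


class Stage(Enum):
    CREATED = "created"
    ASSETS_READY = "assets_ready"
    GENERATING = "generating"
    IN_REVIEW = "in_review"
    APPROVED = "approved"


# Hypothetical transition table; IN_REVIEW -> GENERATING models a rejected take.
TRANSITIONS = {
    Stage.CREATED: {Stage.ASSETS_READY},
    Stage.ASSETS_READY: {Stage.GENERATING},
    Stage.GENERATING: {Stage.IN_REVIEW},
    Stage.IN_REVIEW: {Stage.APPROVED, Stage.GENERATING},
    Stage.APPROVED: set(),
}


class Entity:
    """An episode, scene, shot, or asset tracked through its lifecycle."""

    def __init__(self, name: str):
        self.name = name
        self.stage = Stage.CREATED
        self.history = [Stage.CREATED]

    def advance(self, target: Stage) -> None:
        if target not in TRANSITIONS[self.stage]:
            raise ValueError(f"{self.name}: illegal transition {self.stage.value} -> {target.value}")
        self.stage = target
        self.history.append(target)
```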

Character consistency is enforced through reference-based generation — the same turnaround sheet is passed to every shot featuring that character, with the prompt explicitly instructing the model to match facial features, clothing, and build. Shots within the same scene share location and character assets, enforcing visual continuity across sequential frames.

Bulk generation runs through GCP Cloud Tasks — hundreds of shots queued and dispatched asynchronously. Generated assets pass through iterative review cycles with conversational refinement and visual markup. Quality gates enforce approval before downstream use. This pipeline generated the Mahabharat series currently streaming on JioHotstar.

Every AI job, whether it comes from the Agentic Canvas, the episodic pipeline, or the direct API, routes through a single Inference Gateway. Jobs are enqueued per-org with priority (HIGH / NORMAL / LOW) and dispatched using Weighted Round Robin across 81+ models on 8 provider adapters (Replicate, FAL, and more) plus an internal H100 GPU fleet. Before dispatch, four concurrency levels are checked: org global cap, org per-API cap, provider API cap, and provider global cap. Provider-level limits use Redis slot-based concurrency with a TTL, so slots held by a crashed worker expire and self-heal.
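A sketch of the dispatch decision, assuming Weighted Round Robin is applied across the HIGH / NORMAL / LOW priority queues with illustrative weights 5 / 3 / 1 (the source states neither the weights nor exactly what is weighted). The WRR variant shown is the "smooth" one nginx uses. The in-memory `inflight` dict stands in for the Redis-backed counters, and TTL expiry is omitted.

```python
from collections import deque
from typing import Optional


class SmoothWRR:
    """Smooth weighted round robin (the variant nginx uses) over named queues."""

    def __init__(self, weights: dict):
        self.weights = weights
        self.current = {name: 0 for name in weights}

    def pick(self, eligible: set) -> Optional[str]:
        total, best = 0, None
        for name, weight in self.weights.items():
            if name not in eligible:
                continue
            self.current[name] += weight
            total += weight
            if best is None or self.current[name] > self.current[best]:
                best = name
        if best is not None:
            self.current[best] -= total
        return best


def cap_keys(job: dict) -> list:
    # The four levels: org global, org per-API, provider per-API, provider global.
    return [
        ("org", job["org"]),
        ("org_api", job["org"], job["api"]),
        ("provider_api", job["provider"], job["api"]),
        ("provider", job["provider"]),
    ]


def dispatch_one(queues: dict, wrr: SmoothWRR, inflight: dict, limits: dict) -> Optional[dict]:
    """Pick a priority queue by WRR and dispatch its head job if all four caps allow it."""
    eligible = {name for name, q in queues.items() if q}
    while eligible:
        name = wrr.pick(eligible)
        job = queues[name][0]
        keys = cap_keys(job)
        if all(inflight.get(k, 0) < limits.get(k, float("inf")) for k in keys):
            queues[name].popleft()
            for k in keys:
                inflight[k] = inflight.get(k, 0) + 1  # released when the job finishes
            return job
        eligible.discard(name)  # head job is throttled; give another queue a turn
    return None
```

Over any run of 9 picks with all queues non-empty, the smooth variant selects HIGH 5 times, NORMAL 3 times, and LOW once, interleaved rather than in bursts.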

Each job submission carries an idempotency key — duplicate submissions (network retries, client bugs) are rejected at the gateway level. Jobs are claimed using lease-based locking: a worker acquires a time-limited lease before processing, preventing double-dispatch even if multiple workers poll the same queue.
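An in-memory sketch of idempotent submission and lease-based claiming. `JobStore` and its fields are hypothetical. In a shared datastore the claim would need to be one atomic conditional update (for example a MongoDB `findOneAndUpdate` filtered on an empty or expired lease) rather than a Python loop.

```python
import time
import uuid
from typing import Callable, Optional, Tuple


class JobStore:
    """In-memory stand-in for the gateway's job table (hypothetical schema)."""

    def __init__(self, clock: Callable[[], float] = time.monotonic):
        self.clock = clock
        self.jobs: dict = {}
        self.by_key: dict = {}  # idempotency key -> job id

    def submit(self, idempotency_key: str, payload: dict) -> Tuple[str, bool]:
        """Return (job_id, created); a repeated key never creates a second job."""
        if idempotency_key in self.by_key:
            return self.by_key[idempotency_key], False
        job_id = str(uuid.uuid4())
        self.jobs[job_id] = {"payload": payload, "lease_owner": None, "lease_until": 0.0, "done": False}
        self.by_key[idempotency_key] = job_id
        return job_id, True

    def claim(self, worker: str, lease_seconds: float = 30.0) -> Optional[str]:
        """Take a time-limited lease on the first unfinished job that nobody holds."""
        now = self.clock()
        for job_id, job in self.jobs.items():
            if job["done"]:
                continue
            if job["lease_owner"] is None or job["lease_until"] <= now:
                job["lease_owner"], job["lease_until"] = worker, now + lease_seconds
                return job_id
        return None

    def complete(self, job_id: str, worker: str) -> bool:
        job = self.jobs[job_id]
        if job["lease_owner"] != worker or job["lease_until"] <= self.clock():
            return False  # lease lost; another worker may own the job now
        job["done"] = True
        return True
```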

Crash recovery: jobs are written to a write-ahead log before they are enqueued, and four background sweeper workers run continuously. One reclaims expired leases, one detects orphaned jobs (started but never completed), one reconciles state between the queue and the datastore, and one repairs concurrency counters that drifted due to crashes. Failed jobs retry with exponential backoff; exhausted jobs move to a Dead Letter Queue for manual inspection. No job is ever lost or double-dispatched.
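Retry scheduling with exponential backoff and a dead-letter cutoff can be sketched as follows. The base delay, the 300-second cap, and `max_attempts` are illustrative values, and the jitter option is a common addition that the source does not mention.

```python
import random


def backoff_delay(attempt: int, base: float = 1.0, cap: float = 300.0, jitter: bool = False) -> float:
    """Delay before retry `attempt` (1-based): base * 2**(attempt - 1), capped at `cap`."""
    exponent = min(attempt - 1, 62)  # bound the exponent so huge attempt counts can't overflow
    delay = min(cap, base * 2 ** exponent)
    return random.uniform(0, delay) if jitter else delay  # optional "full jitter"


def handle_failure(job: dict, max_attempts: int, retry_queue: list, dead_letters: list) -> None:
    """Schedule a retry, or move the job to the dead-letter queue once attempts are exhausted."""
    job["attempts"] = job.get("attempts", 0) + 1
    if job["attempts"] >= max_attempts:
        dead_letters.append(job)  # kept for manual inspection, never silently dropped
    else:
        retry_queue.append((backoff_delay(job["attempts"]), job))
```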

Failures can happen at every level of the pipeline — the LLM can truncate a plan, a model can refuse a prompt, a provider can run out of credits. The system is designed so that every agent and every execution step has a fallback path.

Error classification: Raw error messages from 81+ models across 8 providers are wildly inconsistent — each provider has its own format, status codes, and failure modes. A dedicated LLM classifier ingests the raw error and categorizes it: billing, rate limits, outage, content policy, input validation, or unknown. Each category routes to a different alert channel by urgency. Financial errors escalate immediately. On any failure, credits auto-refund — the refund is clamped to prevent negative balances and optionally routes back to the specific project allocation if one exists.
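A sketch of the routing half of error classification, assuming the LLM classifier returns a free-text label. The category values mirror the list above; the channel names, urgency levels, and `route_error` are hypothetical.

```python
from enum import Enum
from typing import Callable, Tuple


class ErrorCategory(str, Enum):
    BILLING = "billing"
    RATE_LIMIT = "rate_limit"
    OUTAGE = "outage"
    CONTENT_POLICY = "content_policy"
    INPUT_VALIDATION = "input_validation"
    UNKNOWN = "unknown"


# Hypothetical channel names and urgencies; financial errors page immediately.
ROUTES = {
    ErrorCategory.BILLING: ("#alerts-billing", "page"),
    ErrorCategory.OUTAGE: ("#alerts-providers", "page"),
    ErrorCategory.RATE_LIMIT: ("#alerts-providers", "warn"),
    ErrorCategory.CONTENT_POLICY: ("#alerts-moderation", "info"),
    ErrorCategory.INPUT_VALIDATION: ("#alerts-inputs", "info"),
    ErrorCategory.UNKNOWN: ("#alerts-triage", "warn"),
}


def parse_category(llm_label: str) -> ErrorCategory:
    """Normalize the classifier's free-text answer; anything unexpected becomes UNKNOWN."""
    label = llm_label.strip().lower().replace(" ", "_").replace("-", "_")
    try:
        return ErrorCategory(label)
    except ValueError:
        return ErrorCategory.UNKNOWN


def route_error(raw_error: str, classify: Callable[[str], str]) -> Tuple[ErrorCategory, str, str]:
    try:
        category = parse_category(classify(raw_error))
    except Exception:
        category = ErrorCategory.UNKNOWN  # the classifier itself failed: still alert someone
    channel, urgency = ROUTES[category]
    return category, channel, urgency
```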

The system uses a dual-write pattern: MongoDB Atlas is the source of truth for all state, while Firestore serves as a real-time notification layer. Every mutation (job completion, credit deduction, message send, canvas step progress) writes to MongoDB first, then mirrors the change to Firestore with an asynchronous, fire-and-forget write. The frontend subscribes to Firestore snapshots for instant UI updates: credit balances change in real time, canvas steps animate as they complete, and chat messages appear without polling.

Firestore writes use merge semantics, so partial updates never clobber unrelated fields. Batch operations are chunked into groups of 500 to stay within transaction limits. If a Firestore write fails, the system logs it and moves on: the REST API always has the correct state from MongoDB, so the frontend self-heals on the next fetch. This gives us real-time UX without making Firestore a hard dependency.

The system runs across GCP, Azure, and AWS — Azure Container Apps for the backend with horizontal autoscaling, MongoDB Atlas as the primary datastore with connection pooling and retry writes, Redis for caching, coordination, and concurrency slot management, and Firestore for real-time frontend sync.

Agent prompts are stored in the database and hot-swappable without redeploy — allowing A/B testing of planner, validator, and verifier instructions in production. Agent configs also track operational metrics: total invocations, token usage (in/out), average latency, error count, and error rate. This makes it possible to compare prompt variants quantitatively, not just by vibes.
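The operational metrics can be kept with running counters and an incremental mean, so no per-call latency samples need to be stored. `AgentMetrics` is a hypothetical in-process stand-in for the counters kept on each agent config.

```python
from dataclasses import dataclass


@dataclass
class AgentMetrics:
    invocations: int = 0
    tokens_in: int = 0
    tokens_out: int = 0
    errors: int = 0
    avg_latency_ms: float = 0.0

    def record(self, latency_ms: float, tokens_in: int = 0, tokens_out: int = 0,
               error: bool = False) -> None:
        self.invocations += 1
        self.tokens_in += tokens_in
        self.tokens_out += tokens_out
        self.errors += int(error)
        # Incremental mean: new_avg = old_avg + (x - old_avg) / n
        self.avg_latency_ms += (latency_ms - self.avg_latency_ms) / self.invocations

    @property
    def error_rate(self) -> float:
        return self.errors / self.invocations if self.invocations else 0.0
```

Keeping one `AgentMetrics` per prompt variant is what makes side-by-side comparison of, say, two planner prompts a matter of reading two error rates and two latency averages.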

I lead on-call rotation, maintain runbooks, own monitoring and alerting, and handle incident response for the production infrastructure.